Can Companies Insure Against AI’s Growing Risks?

The Fletcher School, Tufts University

The Issue:

Artificial intelligence promises new capabilities that will replace less efficient methods of obtaining and acting on information across a wide range of commercial and personal uses. But AI systems can also create a variety of technical, legal, and financial risks for the people and companies that use, develop, and maintain them. As AI is applied in more areas, lawsuits involving it are growing in both number and variety. These lawsuits raise complicated questions about who is responsible for AI systems, who should pay for any resulting liability, and what role insurers should have in covering these costs.

The Facts:

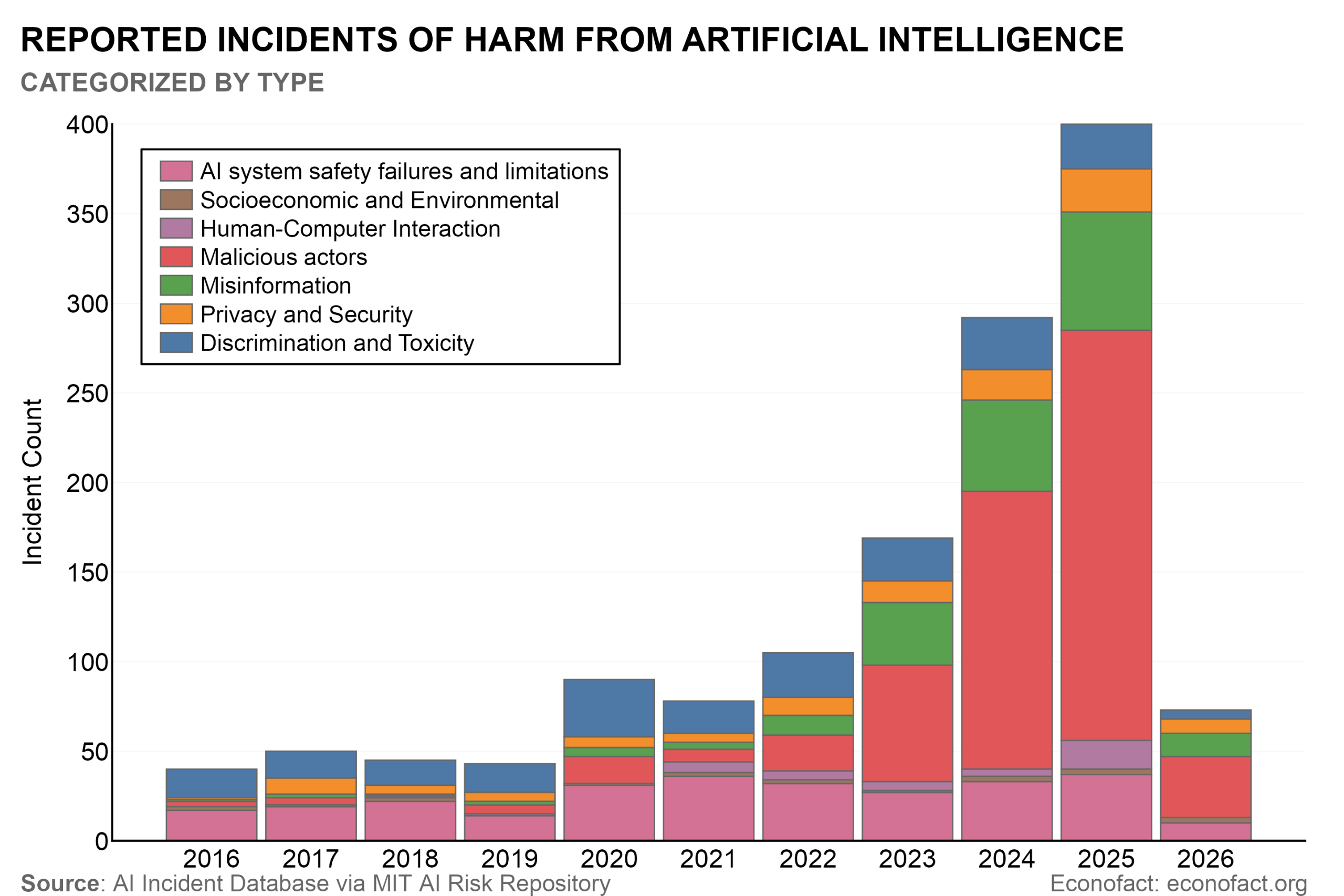

- Harms from AI systems are already emerging, and the systems have the potential to pose graver risks. AI has contributed to a wide range of real-world problems, from fines imposed on law firms for citing non-existent, AI-generated cases in court, to discrimination by resume-screening algorithms, to deepfakes that threaten individual privacy. The MIT AI Risk Repository offers one way to gauge the growing range of risks stemming from the rapidly evolving technology. The project has classified over 1,700 different risks and tracks the annual number of AI-related incidents, which has been increasing over time (see chart). Many experts warn of possible profound and far-reaching safety risks from AI beyond those already being experienced, including the potential for AI to assist malicious actors in developing cyber, biological, or chemical weapons; to inflict physical harm through autonomous agents; and to drive large-scale financial fraud.

- AI systems are already giving rise to major legal claims and settlements related to intellectual property, safety, and security. Several high-profile lawsuits have alleged that the developers of prominent large language models (LLMs) violated copyright protections by scraping training data for their models from the Web. In 2025, Anthropic agreed to pay $1.5 billion to settle a copyright infringement lawsuit. The settlement, which is the largest copyright settlement in history, shows how high the stakes are in these lawsuits against major AI companies, because the claims cut at the core of what the companies do: training the models requires an enormous amount of text. Because Anthropic settled, the underlying legal issue remains unresolved. Another significant IP case is pending against OpenAI, and a class-action copyright infringement lawsuit against Meta was filed in May 2026. Beyond intellectual property lawsuits and the risks associated with scraping large-scale online training data sets, AI companies are beginning to be sued over a variety of other issues associated with their products and services. LLM companies have been accused of contributing to suicides, and even murder, by failing to put appropriate safeguards in place. After 16-year-old Adam Raine died by suicide in April 2025, following a series of interactions about his mental health with OpenAI’s ChatGPT, his parents sued OpenAI, alleging that it built its product “with features intentionally designed to foster psychological dependency.” Also in 2025, a jury in Florida found Tesla partially responsible for a fatal car crash involving its Autopilot driving software, awarding the victims more than $240 million in damages.

- Because this litigation is arriving before the industry is profitable, companies are turning to venture funding and insurance to cover these costs, since they lack other sources of funds. Already, the largest AI companies are struggling to acquire as much insurance coverage as they would like to shield them against the potential risks associated with their products. In 2025, the Financial Times reported that OpenAI had not been able to purchase as much insurance coverage for emerging AI risks as might be required to cover the costs of the litigation it could face. Unable to acquire sufficient coverage, some AI companies have considered “self-insuring” by designating investor funds to help cover these costs. Anthropic is reportedly using some of its own funds to cover the $1.5 billion settlement, for instance, but it is unclear exactly how much of that it will pay for directly.

- Insurers are often not confident in their ability to cover large AI-related losses, both because they lack reliable data on these incidents with which to predict the risk, and because they fear that the costs associated with AI-related risks could be exceedingly high. Artificial intelligence is going to touch almost every type of risk that is currently insured. Many existing insurance policies, including cyber insurance and commercial general liability insurance, already cover many risks associated with AI. And while some insurers have tried to limit their exposure to AI risks by excluding coverage for certain types of AI-related incidents from their products, such exclusions may be difficult to apply where it is hard to establish whether AI was involved in, for instance, perpetrating a cyberattack or writing and delivering malware to a victim.

- At the same time, some insurers are seizing the opportunity to develop and market new AI-specific insurance coverage to companies worried about their risk exposure in this area. While most of these AI policies are relatively limited in what they cover and apply only to specific types of risks — including many risks that may have already been covered under existing lines of insurance — they hint at the possible emergence of a new market for standalone AI insurance that could shift more of these costs onto the insurance carriers willing to bear them.

- The challenges of insuring against AI risks are in some ways to be expected in the face of a rapidly emerging technology. There are parallels with the recent evolution of cyber insurance. Computing technology and the internet came to permeate every sector of the economy, and with them came initially unanticipated risks, ranging from fraud, to ransomware and extortion, to denial-of-service attacks and data breaches. Insuring companies against cyber risks has been challenging because insurers have lacked reliable historical data to model cyber losses, a problem compounded by the rapid evolution of cyber risks. Moreover, assessing and mitigating cyber risk can demand deep technical expertise and, in many cases, intrusive access to firms’ internal systems. Nonetheless, as the number of cyber incidents increased and more data accumulated, insurance became more widely available, and insurers became better able to resolve some liability claims and to recommend ways of safeguarding these systems. Regulation also played a more active role following a very visible cyberattack on a major U.S. oil pipeline. As with cyber risk, historical data on AI harms and liability will often provide a weak foundation for modeling the most important future risks, given the rapid pace of technological change, the wide diffusion of the technology, and the lack of standardized data collection and disclosure. Moreover, AI likely poses more formidable risks given the greater complexity of the technology and the speed with which it is evolving. Even so, regulations that promote better information and disclosure regarding AI risks and events could expand the domains in which insurers can price and manage safety risks.

What this Means:

As AI is used for a wider range of activities and decisions, lawsuits will become increasingly common and diverse, attempting to hold AI companies, operators, and developers responsible for a growing number of potential risks associated with their products. These lawsuits, and the sizable settlements and damages associated with them, will have profound consequences for who develops AI systems and how. One crucial factor will be what role insurance companies play in covering these costs and whether companies can continue to shift most of their liability risk to their insurers. These risks are already producing landmark lawsuits and settlements that could bankrupt many companies unless significant risk-sharing mechanisms, such as insurance, are in place. But it is not yet clear whether insurers will embrace a role in managing AI risks and, if so, which risks they will be willing to cover and which they may view as fundamentally too large or unpredictable to insure.